LOK8: Personalised Location Based Services (PLBS) Research

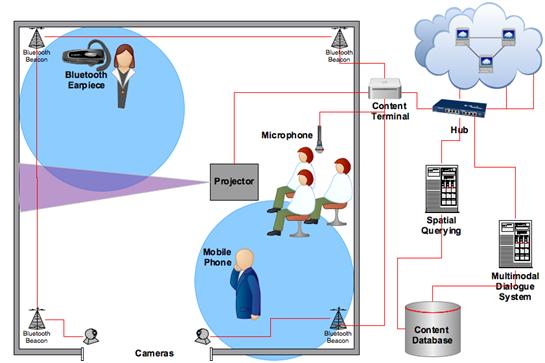

The LOK8 (pronounced "locate") project is a joint project between the School of Computing and the Digital Media Centre. It investigates personalised location based services using mobile devices ranging from auditory user interfaces on Bluetooth earpieces to intelligent avatars equipped with cameras, microphones, speakers, and projectors.

PLBS are an emerging area of core strategic ICT research in DIT, where existing inter-faculty research has led to the formation of a dedicated research team focused on this area. In LOK8 (locate), PLBS will be investigated using mobile devices ranging from auditory user interfaces on Bluetooth earpieces to intelligent avatars equipped with cameras, microphones, speakers, and projectors. LOK8 consolidates our research team and builds further momentum by constructing a sustainable testing environment and by creating four new positions for PhD researchers. The project is highly innovative in its multidisciplinary integration of diverse research fields: location determination and spatial querying; contextual awareness and intelligent artificial systems; and 3D avatars and auditory user interfaces. Combined, these will produce an effective means for location-based, multimodal interaction.

DIT’s strategic research planning exercise has identified ICT as a research priority for the Institute. LOK8 will strengthen strategic connections between researchers in the Faculties of Applied Arts and Science, leading to joint teaching initiatives in both undergraduate and postgraduate programmes across both faculties. Knowledge transfer opportunities are further enhanced by provision for a seminar series and a dedicated international conference on multimodal LBS. New models of researcher skills training and graduate education will be exploited during the project to improve the transfer of research outputs back into the teaching domain. Personalised LBS will continue to attract research funding at national and EU levels, and the team is eager to consolidate its expertise and exploit these opportunities.

LOK8: Mark Dunne’s Role – Avatar

Intelligent 3D Avatars

Objectives

- To investigate the design of intelligent 3D avatars.

- To contribute to an overall multimodal interface.

Description

This work package will begin with an overview of the various options available for displaying virtual avatars. The most obvious and widely used approach is to simply use screens mounted throughout the environment. However, this project will go beyond that, in particular investigating novel display methods such as spatial augmented reality displays using moveable projectors, avatars displayed on small mobile devices such as phones, and video screens placed on mobile robotic platforms. These more exotic display techniques will allow the creation of more engaging user experiences. Furthermore, a major investigation will take place into how avatars should behave in multi-user environments.

Task 5.1

Develop the architecture for multi-modal believable virtual characters. Building on the knowledge gained from the initial overview of display options, this part of the work package will design and develop an architecture for the creation of believable virtual characters that will perform cohesive, engaging multi-user interactions across a number of display technologies.

Task 5.2

Development and testing of the 3D virtual avatar system in the LOK8 test environment. The final task in this work package will develop a complete 3D virtual avatar solution within the LOK8 test environment.

Deliverables

- Virtual character architecture document (Report and Prototype – M24)

- Final solution in test environment (Report and Prototype – M30)

WP 1

Multimodal Interface Design: The initial design of a multimodal vocabulary will be used as the basis of work in WP 5 and WP 6 to define the information that must be conveyed in each modality. This vocabulary will also provide WP 4 with a specification of the content that can be provided by the LOK8 system and WP 7 with the specification of the final multimodal interface to be produced.

WP 5

3D Avatars: This work package will investigate novel display methods such as spatial augmented reality displays using moveable projectors, avatars displayed on small mobile devices such as phones, and video screens placed on mobile robotic platforms. We will also investigate how avatars should behave in multi-user environments.

WP 7

Multimodal Interface Integration: This work package will focus on the integration of input and output modalities from WP 2, WP 5 and WP 6. This will create a user interface with gestural, textual, visual and auditory inputs and outputs based on the specification provided by WP 1.

WP 8

System and Interface Testing: Testing will be performed using the LOK8 system. Testing of location and spatial querying will be performed to ensure that context is defined correctly. Analysis of the multimodal dialogue system and its ability to provide contextually aware content will also be undertaken. For interface testing, usability and user experience evaluations will be performed.

LOK8: Niels Schütte’s Role – Contact

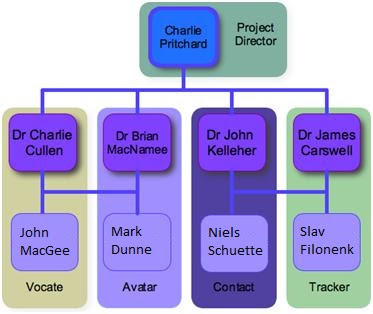

LOK8: Team

Supervisor – Researcher

Dr John D Kelleher – Niels Schütte

Dr Brian Mac Namee – Mark Dunne

Funding Source

HEA Strand III (€395,000)